|

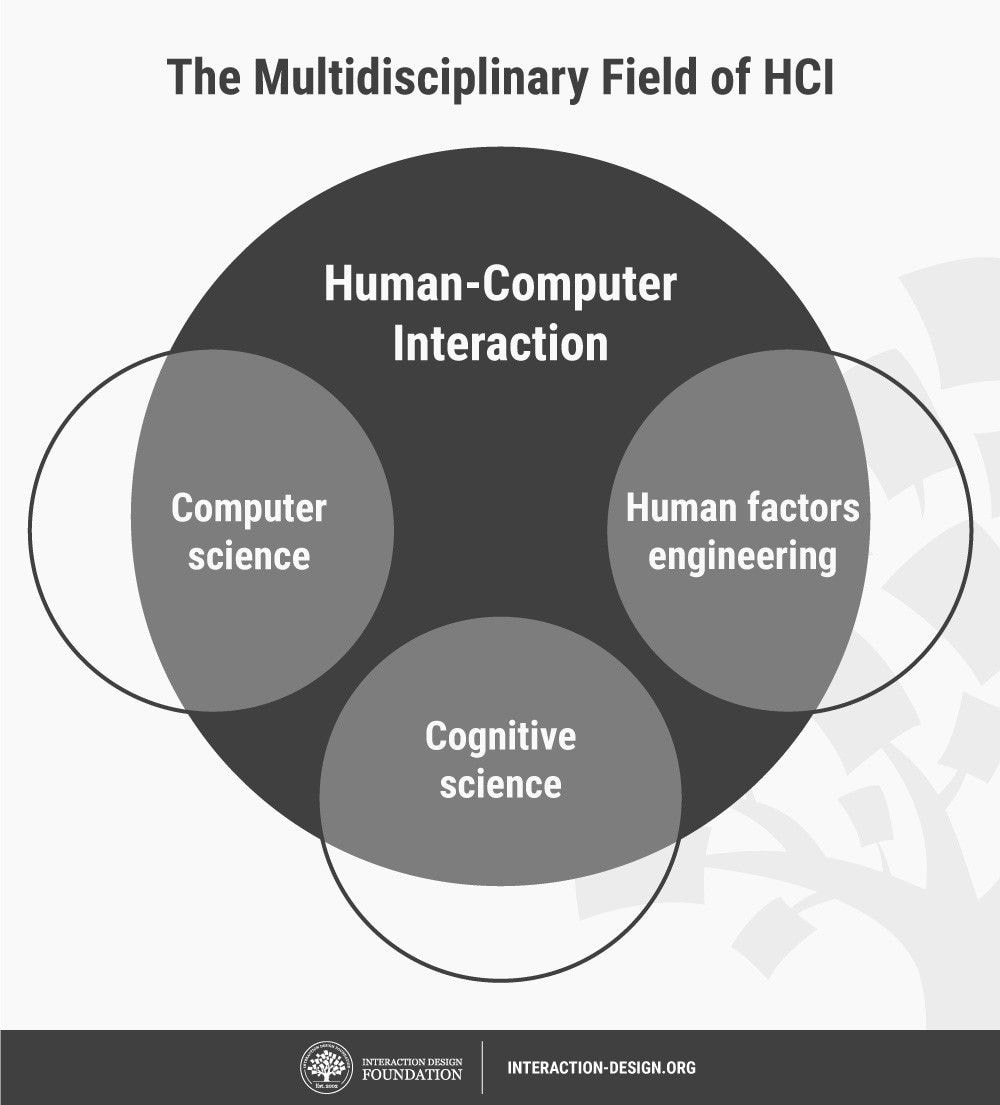

What HCI Is and Why It Is Important

What is HCI, or Human-Computer Interaction? It is a cross-disciplinary area (e.g., engineering, psychology, ergonomics, design) that deals with the theory, design, implementation, and evaluation of the ways that humans use and interact with computing devices. It is the study of the way in which computer technology influences human work and activities.

Interaction is a concept to be distinguished from a similar term, interface. In what way do humans and computers interact? The user interacts directly with hardware for human input and output, such as displays, for example through a graphical user interface. The user interacts with the computer over this software interface using the given input and output (I/O) hardware.

Why is it important? It brings together expertise from computer science, cognitive psychology, behavioral science, and design to understand and facilitate better interactions between users and machines.

The Rise of HCI

HCI surfaced in the 1980s with the advent of personal computing, just as machines such as the Apple Macintosh, IBM PC 5150, and Commodore 64 started turning up in homes and offices in society-changing numbers. For the first time, sophisticated electronic systems were available to general consumers for uses such as word processing, gaming, and accounting. Consequently, as computers were no longer room-sized, expensive tools built exclusively for experts in specialized environments, the need to create human-computer interaction that was also easy and efficient for less experienced users became increasingly vital. From its origins, HCI would expand to incorporate multiple disciplines, such as computer science, cognitive science, and human-factors engineering.

The term high usability means that the resulting interfaces are easy to use, efficient for the task, ensure safety, and lead to a correct completion of the task. Usable and efficient interaction with the computing device in turn translates to higher productivity. The simple aesthetic appeal of interfaces (while satisfying the need for usability) is now a critical added requirement for commercial success as well.


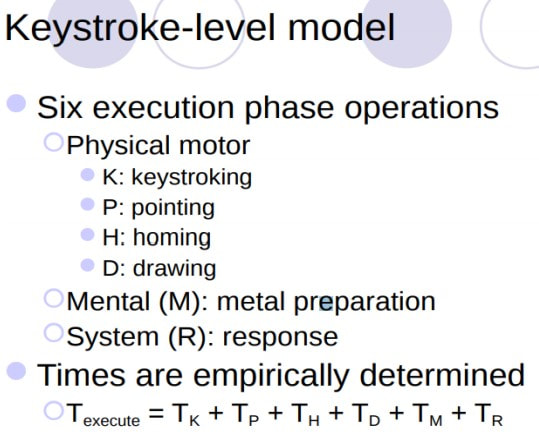

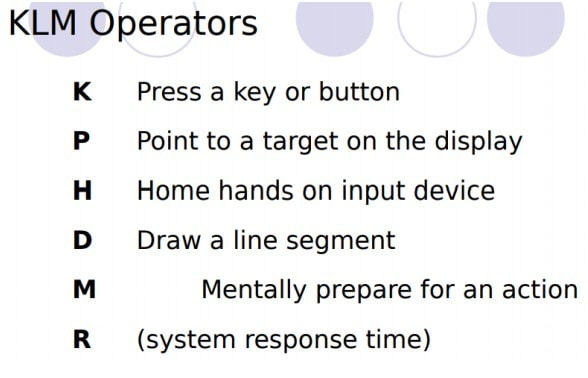

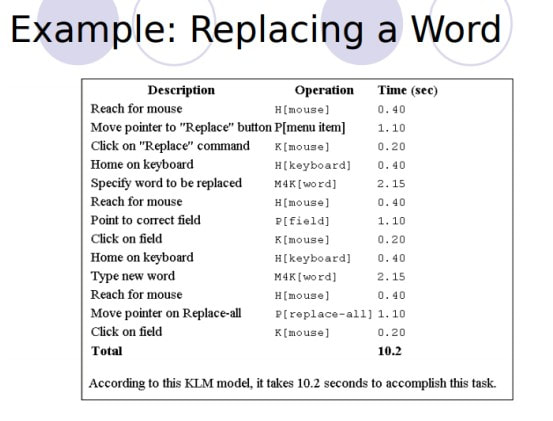

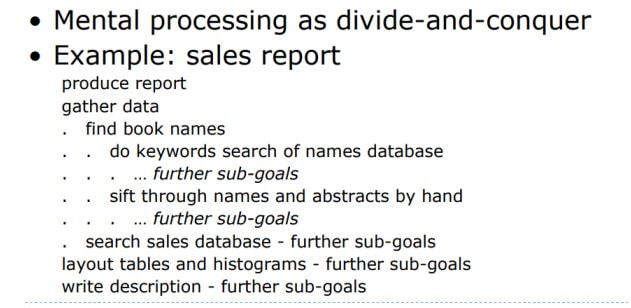

Cognitive Modeling
• The cognitive approach to HCI attempts to predict user performance based on a model of cognition
• Start with a model of how humans act
• Use that model to predict how humans would complete tasks using a particular UI
• Provided the theoretical foundation of HCI in the 1970s and 1980s

Cognitive Theories
• KLM (Keystroke-Level Model) - Description of user tasks based on low-level actions (keystrokes, etc.)
• GOMS (Goals, Operators, Methods, Selection rules) - Higher-level than KLM, with structure and hierarchy
• Fitts’ Law - Predicts how long it will take a user to select a target; used for evaluating device input
• Model Human Processor (MHP) - Model of human cognition underlying each of these theories
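As a rough illustration of how the Keystroke-Level Model listed above predicts task time, the sketch below adds up a fixed duration for each low-level action in a task. The operator durations are the commonly cited approximate values from Card, Moran, and Newell; the function name `klm_estimate`, the one-letter task encoding, and the example task are assumptions made for illustration and do not come from these notes.

```python
# Commonly cited approximate KLM operator times, in seconds (assumed here).
OPERATOR_SECONDS = {
    "K": 0.20,  # press a key or button (skilled typist)
    "P": 1.10,  # point at a target with a mouse
    "H": 0.40,  # move the hand between keyboard and mouse
    "M": 1.35,  # mental preparation before an action
}


def klm_estimate(operators: str) -> float:
    """Return the predicted time for a sequence of KLM operators, e.g. 'MHPK'."""
    return sum(OPERATOR_SECONDS[op] for op in operators)


# Hypothetical task: think, reach for the mouse, point at a field, click,
# return to the keyboard, then type a 4-character code.
task = "M" + "H" + "P" + "K" + "H" + "KKKK"
print(f"Predicted time: {klm_estimate(task):.2f} s")
```

Comparing the totals for two candidate designs of the same task gives a quick estimate of which one a practiced user could complete faster, before any user testing.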

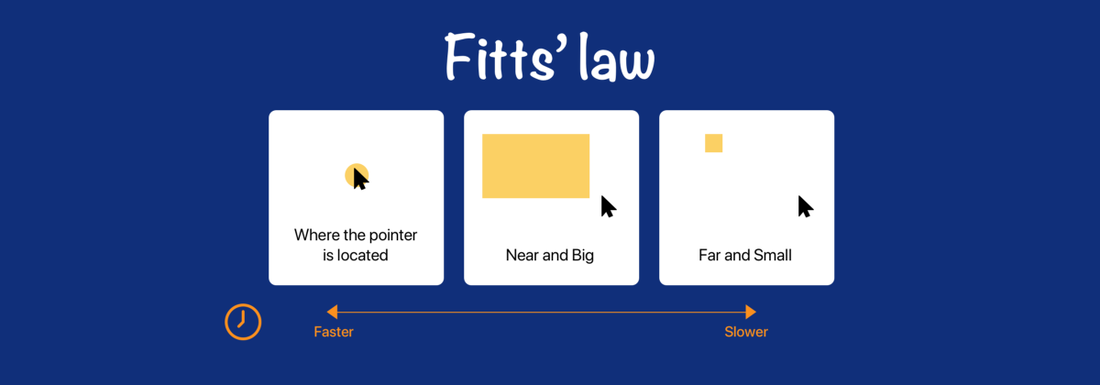

• GOMS (Goals, Operators, Methods, Selection rules) - Higher-level than KLM, with structure and hierarchy
• Fitts’ Law - Predicts how long it will take a user to select a target; used for evaluating device input
  - Fitts’ Law states that the amount of time required for a person to move a pointer (e.g., mouse cursor) to a target area is a function of the distance to the target divided by the size of the target. Thus, the longer the distance and the smaller the target’s size, the longer it takes.
  - Fitts’ Law is widely applied in user experience (UX) and user interface (UI) design. For example, this law influenced the convention of making interactive buttons large (especially on finger-operated mobile devices), because smaller buttons are more difficult (and time-consuming) to click. Likewise, the distance between a user’s task/attention area and the task-related button should be kept as short as possible.
  - The law is applicable to rapid, pointing movements, not continuous motion (e.g., drawing). Such movements typically consist of one large motion component (ballistic movement) followed by fine adjustments to acquire (move over) the target. The law is particularly important in visual interface design, or any interface involving pointing (by finger, mouse, etc.).
• Model Human Processor (MHP) - Model of human cognition underlying each of these theories
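The relationship described for Fitts’ Law above can be sketched numerically. The notes only say that time is a function of distance divided by target size; the snippet below assumes the widely used Shannon formulation, MT = a + b * log2(D / W + 1). The constants `a` and `b` are placeholder values chosen for illustration; in practice they are fitted from measurements for a particular device and user group.

```python
import math


def fitts_movement_time(distance: float, width: float,
                        a: float = 0.1, b: float = 0.15) -> float:
    """Predicted pointing time in seconds: a + b * log2(distance / width + 1).

    a and b are hypothetical constants (assumptions), not measured values.
    """
    if distance < 0 or width <= 0:
        raise ValueError("distance must be >= 0 and width must be > 0")
    index_of_difficulty = math.log2(distance / width + 1)  # in bits
    return a + b * index_of_difficulty


# Same distance, two button sizes (units only need to match, e.g. pixels).
small = fitts_movement_time(distance=700, width=20)
large = fitts_movement_time(distance=700, width=100)
print(f"small button: {small:.3f} s, large button: {large:.3f} s")
```

With these placeholder constants the larger button yields the shorter predicted time, which matches the design guidance above: make targets bigger and keep them close to where the user is already working.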

The Model Human Processor (MHP) is a cognitive modeling method developed by Stuart K. Card, Thomas P. Moran, and Allen Newell (1983) that is used to calculate how long it takes to perform a certain task. It is a design tool used for creating an effective user interface. It draws an analogy between the processing and storage facilities of a computer system and the perceptual, cognitive, memory, and motor activities of a computer user.

What is User Interface?

The user interface (UI) is the point of human-computer interaction and communication in a device. This can include display screens, keyboards, a mouse, and the appearance of a desktop. It is also the way through which a user interacts with an application or a website.

User Interface Design

User interface (UI) design is the process designers use to build interfaces in software or computerized devices, focusing on looks or style. Designers aim to create interfaces which users find easy to use and pleasurable. UI design refers to graphical user interfaces and other forms, e.g., voice-controlled interfaces.

There are four prevalent types of user interface, and each has a range of advantages and disadvantages:
▪ Command Line Interface
▪ Menu-driven Interface
▪ Graphical User Interface
▪ Touch screen Graphical User Interface

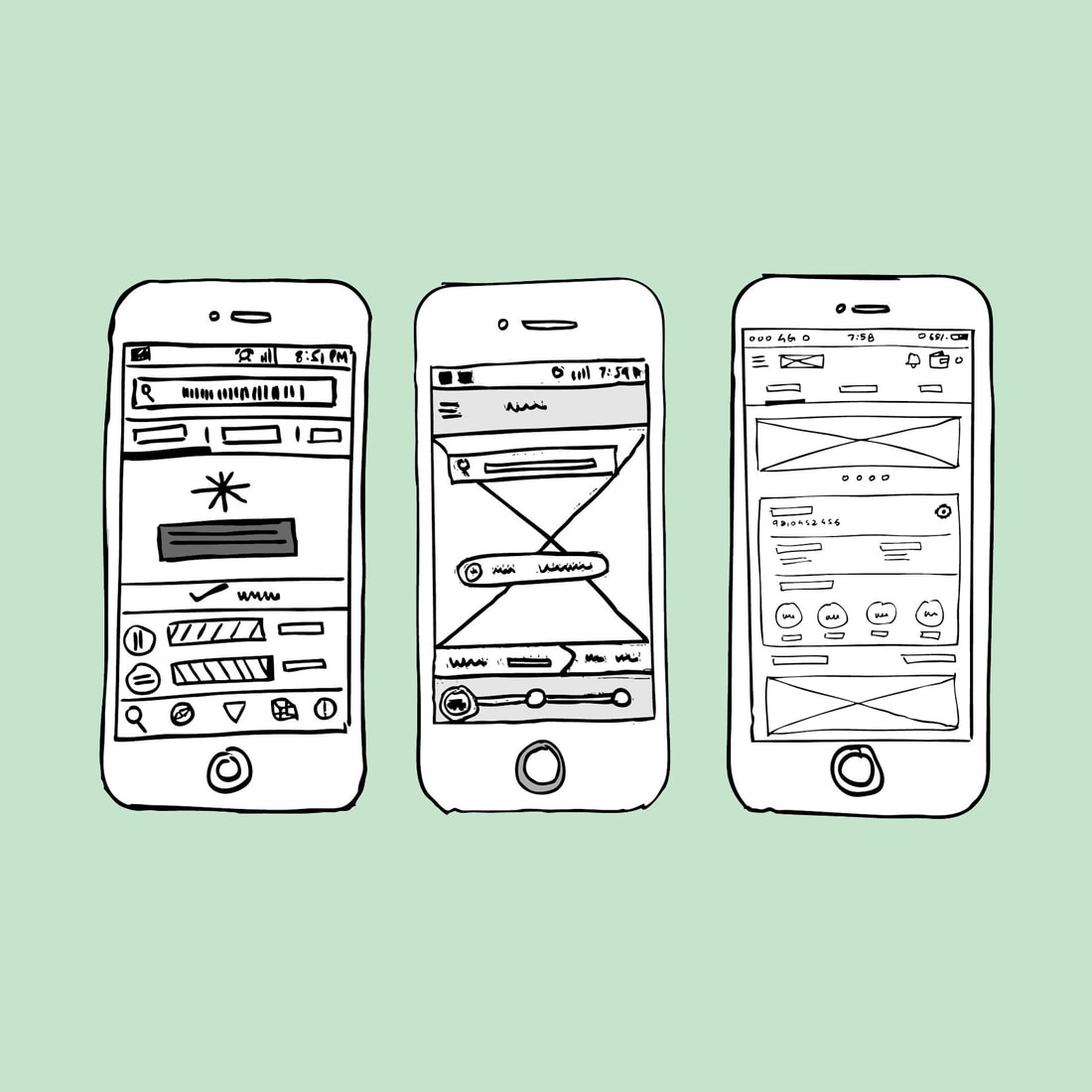

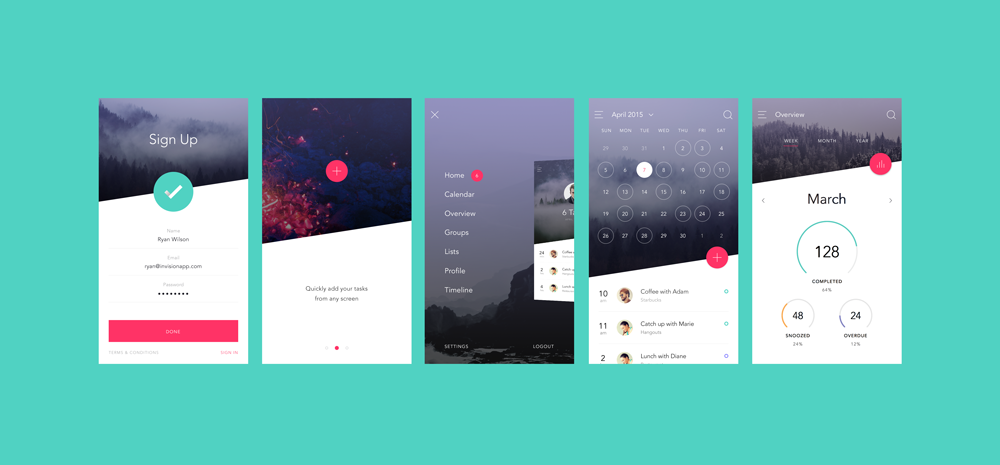

Prototyping is another type of software engineering model that can cover the complete range of functionalities of the projected system. In HCI, a prototype is a trial, partial design that helps users test design ideas without building a complete system. Sketches are one example of a prototype: sketches of an interactive design can later be turned into a graphical interface. A Medium Fidelity Prototype involves some but not all procedures of the system, e.g., the first screen of a GUI. Finally, a High Fidelity Prototype simulates all the functionalities of the system in a design. This prototype requires time, money, and workforce.

Types of Prototypes

Low Fidelity Prototypes
• Low Fidelity Prototypes are best during the early stages. They help to make changes very quickly with little effort. They can even be sketched on paper with a pen to gather feedback from users in real time, with changes implemented in the prototype within a few hours or maybe minutes.
• They are cheap and quick and don’t require any advanced skills.
• Low Fidelity Prototypes have limited interactivity and are low on functionality, so they are best used to present only the key elements of the most critical idea.
• They are often disposable, throwaway artifacts.
• Low Fidelity Prototypes make it easier for the team and users to understand the product well. However, as you move further into the development cycle, it becomes difficult to maintain them for functional evaluations.

High Fidelity Prototypes
• Closer to the finished product. High Fidelity Prototypes give a real experience of the product. They should be used when multiple iterations of your prototype have already been done with user feedback.
• High Fidelity Prototypes are interactive and functional.
• They replicate the final product and help in gathering concrete feedback, as they mimic the interactivity that users can have with the actual final product.

A prototype is an early sample, model, or release of a product built to test a concept or process or to act as a thing to be replicated or learned from. It is a term used in a variety of contexts, including semantics, design, electronics, and software programming.

Prototyping is an experimental process where design teams implement ideas into tangible forms, from paper to digital. Teams build prototypes of varying degrees of fidelity to capture design concepts and test them on users. With prototypes, you can refine and validate your designs so your brand can release the right products.

Software prototyping is the activity of creating prototypes of software applications, i.e., incomplete versions of the software program being developed. It is an activity that can occur in software development and is comparable to prototyping as known from other fields, such as mechanical engineering or manufacturing.

There are many types of prototype; here are ten of them:

1. Film (movie) prototype: A prototype made using video, just to show others the idea in a graphical/visual format.
2. Feasibility prototype: This type of prototype is usually developed to determine the feasibility of various solutions. It is applied to resolve the technical risks attached to the development in terms of performance, compatibility of components, etc.
3. Horizontal prototype: This is the user interface in the form of screenshots, demonstrating the outer layer of the human interface only, such as windows, menus, and screens. The prototype is used to clarify the scope and requirements of the product.
4. Rapid prototype: The rapid prototyping technique is used to quickly engineer an initial model of a product using three-dimensional computer-aided design when you want to produce something in a short span of time.
5. Simulation: A simulation prototype is a digital recreation of a physical product, used to predict the performance of the product in the real world.
6. Storyboard: A storyboard describes a product in the form of a story and demonstrates a typical order in which information needs to be presented. It helps in determining usable sequences for presenting information.
7. Vertical prototype: A vertical prototype is the back end of a product, such as a database generated to test the front end. It is used to improve database design, test key components at early stages, or showcase a working, though unfinished, model to check the key functions.
8. Wireframe: This is a skeleton of a product, depicted in the form of illustrations or schematics that capture an aspect of the design such as an idea, layout, form, architecture, or sequence.
9. Animation: These are images drawn and put in a sequence that walks you through the proposed 3D structure of the product/solution.
10. Mock-up: This has no functionality and serves only to give an overall visual of the product. It is an unpolished version of the product with no active features.

In conclusion, depending on what you are working on, you will need to decide which prototype works best for you; often several of these may need to be combined into a working prototype.

Keep the interface simple. The best interfaces are almost invisible to the user. They avoid unnecessary elements and are clear in the language they use on labels and in messaging.

Create consistency and use common UI elements. By using common elements in your UI, users feel more comfortable and are able to get things done more quickly. It is also important to create patterns in language, layout, and design throughout the site to help facilitate efficiency. Once a user learns how to do something, they should be able to transfer that skill to other parts of the site.

Be purposeful in page layout. Consider the spatial relationships between items on the page and structure the page based on importance. Careful placement of items can help draw attention to the most important pieces of information and can aid scanning and readability.

Strategically use color and texture. You can direct attention toward or away from items by using color, light, contrast, and texture to your advantage.

Use typography to create hierarchy and clarity. Carefully consider how you use typeface. Different sizes, fonts, and arrangements of the text help increase scannability, legibility, and readability.

Make sure that the system communicates what’s happening. Always inform your users of location, actions, changes in state, or errors. The use of various UI elements to communicate status and, if necessary, next steps can reduce frustration for your user.

Think about the defaults. By carefully thinking about and anticipating the goals people bring to your site, you can create defaults that reduce the burden on the user. This becomes particularly important in form design, where you might have an opportunity to have some fields pre-chosen or filled out.

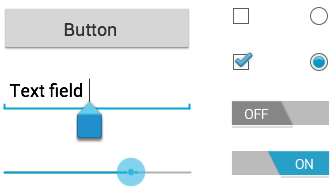

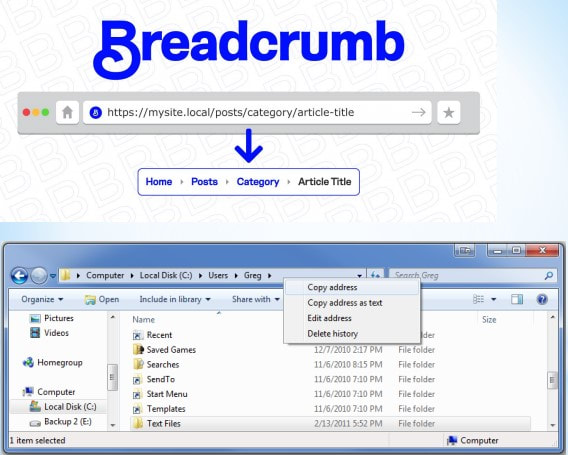

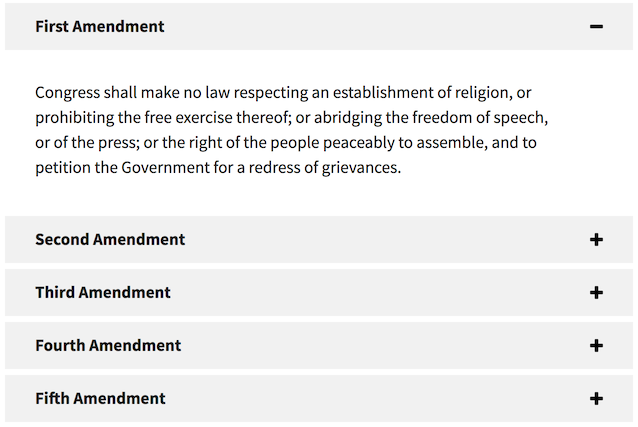

User Interface (UI) Design focuses on anticipating what users might need to do and ensuring that the interface has elements that are easy to access, understand, and use to facilitate those actions. UI brings together concepts from interaction design, visual design, and information architecture.

Choosing Interface Elements

Interface elements include but are not limited to:
• Input Controls: buttons, text fields, checkboxes, radio buttons, dropdown lists, list boxes, toggles, date field
• Navigational Components: breadcrumb, slider, search field, pagination, tags, icons
• Informational Components: tooltips, icons, progress bar, notifications, message boxes, modal windows
• Containers: accordion

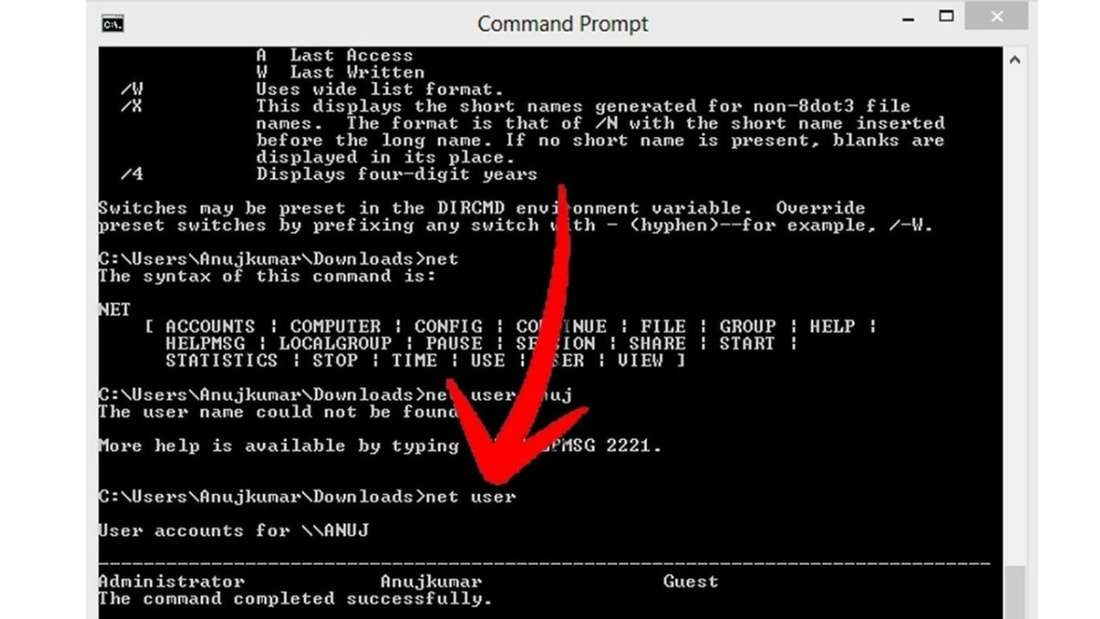

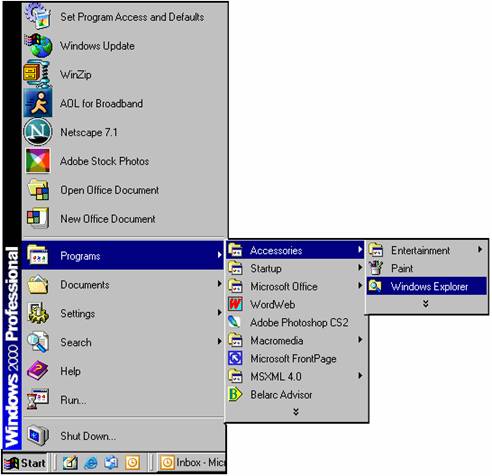

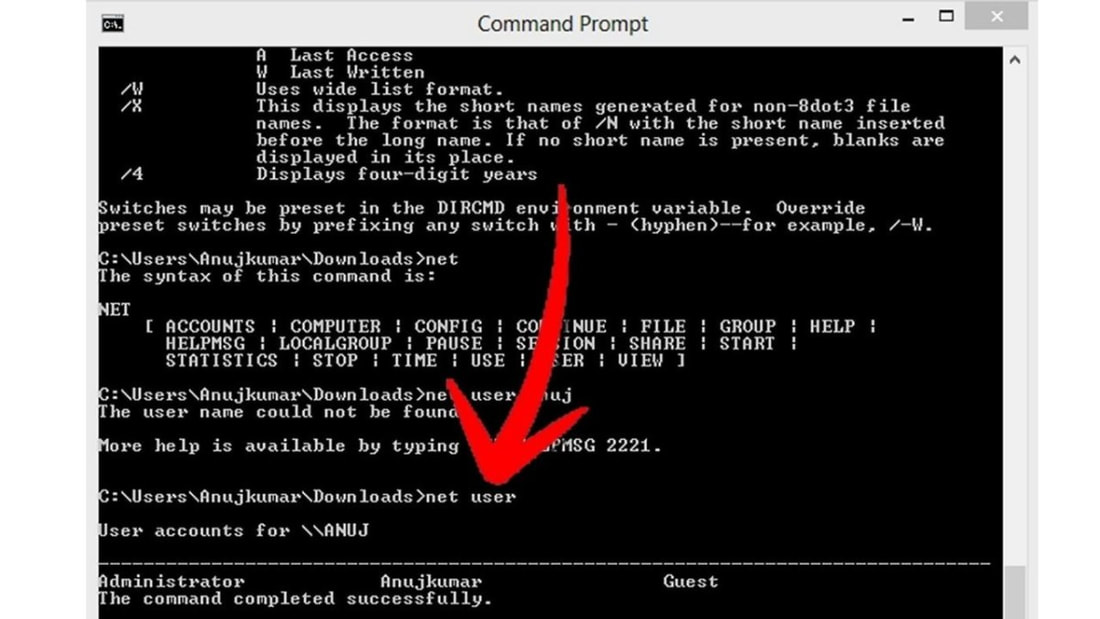

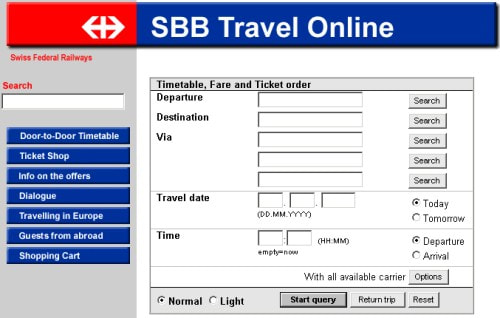

Interaction Styles

An interaction style refers to all the ways the user can communicate or otherwise interact with the computer system. The concept belongs in the realm of HCI, or at least has its roots in the computer medium, usually in the form of a workstation or a desktop computer. There are six different types of interface/interaction styles that might come with an operating system:
1. Graphical User Interfaces (GUI)
2. Command Line Interfaces (CLI)
3. Form-based interfaces
4. Menu-based interfaces
5. Natural language interfaces
6. Direct manipulation

1. Graphical User Interfaces (GUI)

Interfaces that are graphical in nature are known either as Graphical User Interfaces (GUI) or WIMP interfaces (Windows, Icons, Menus and Pointer). You would typically expect to find the following in a GUI or WIMP user interface:
• A 'window' for each open application. Many windows can be open at the same time, but only one window can be active at any one time. There may be some way of indicating which one is active (perhaps by making the bar at the top of the active window bright blue).
• Menus and icons. Available functions can be selected in one of two ways: either by using pop-up or drop-down menus, or by clicking on 'icons'. An icon is simply a small picture that represents a specific function; clicking on it selects that function.
• A pointing device to make selections. It is typically a mouse, a graphics tablet and pen, or a finger on touchscreens. The use of a keyboard to navigate through the application is minimized because it is a relatively time-consuming way of working.

2. Command Line Interface (CLI)

A CLI requires the user to type in commands from a list of allowable commands. Suppose you want to back up a file called donkey.doc to a folder (directory) called animals on your floppy disk. You would type:
C:\> copy donkey.doc a:\animals

3. Forms
- Some operating systems are designed for businesses where employees have to enter lots of information.
- The input into the computer is predictable.

4. Menus

Some operating systems are designed with a menu-based user interface. Menu-based user interfaces are ideal for situations where the user's IT skills cannot be guaranteed, for situations which require selections to be made from a very wide range of options, or for situations which require very fast selection.

5. Natural Language Interface (NLI)

A Natural Language Interface (NLI) is an interface that allows users to interact with the computer using a human language, such as English or Chinese, as opposed to using a computer language, command line interface, or graphical user interface. Examples:
• Siri - A virtual assistant from Apple Inc.; Apple’s built-in, voice-controlled personal assistant available for Apple users. The idea is that you talk to her as you would a friend and she aims to help you get things done, whether that be making a dinner reservation or sending a message.
• Alexa - Virtual assistant AI technology developed by Amazon. It is a cloud-based voice service available on hundreds of millions of devices from Amazon and third-party device manufacturers.
• Google Assistant - An artificial intelligence-powered virtual assistant developed by Google that is primarily available on mobile and smart home devices.
• Cortana - A virtual assistant developed by Microsoft which uses the Bing search engine to perform tasks such as setting reminders and answering questions for the user.

Apple's Siri, Alexa, Google Assistant, and Cortana are natural language interfaces that allow you to interact with your device's operating system using your own spoken language. Natural language interfaces can, however, be difficult to use effectively due to the unpredictable and ambiguous nature of human speech.

6. Direct manipulation

Direct manipulation was introduced by Ben Shneiderman. It is an interaction style in which the objects of interest in the UI are visible and can be acted upon via physical, reversible, incremental actions that receive immediate feedback.

A graphical user interface (GUI) is a computer program that enables a person to communicate with a computer through the use of symbols, visual metaphors, and pointing devices.

A non-graphical user interface is a system that allows the user to communicate with the machine without using any graphics. Text-based interfaces are one example; another that is growing quickly is the voice interface. An example of a non-graphical application on computers is a web server: web servers do not use graphical user interfaces, but they are event-driven, waiting until a request is received before carrying out what needs to be done.

A heuristic evaluation is a usability inspection method for computer software that helps to identify usability problems in the user interface (UI) design. It specifically involves evaluators examining the interface and judging its compliance with recognized usability principles (the "heuristics").

Heuristic evaluation is a process where experts use rules of thumb to measure the usability of user interfaces in independent walkthroughs and report issues. Evaluators use established heuristics (e.g., Nielsen-Molich's) and reveal insights that can help design teams enhance product usability from early in development.

The Nielsen-Molich heuristics state that a system should:
• Keep users informed about its status appropriately and promptly.
• Show information in ways users understand from how the real world operates, and in the users' language.
• Offer users control and let them undo errors easily.

User-centered evaluation is understood as evaluation conducted with methods suited to the framework of user-centered design as it is described in ISO 13407 (ISO, 1999).

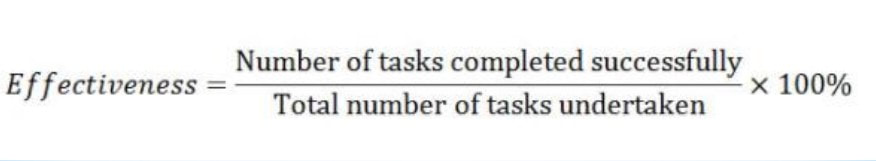

Definition of User-Centered Evaluation

User-Centered Evaluation (UCE) is defined as an empirical evaluation obtained by assessing user performance and user attitudes toward a system, by gathering subjective user feedback on effectiveness and satisfaction, quality of work, support and training costs, or user health and well-being.

Goals of User-Centered Evaluation (UCE)

User-centered evaluation (UCE) can serve three goals:
• supporting decisions
• detecting problems
• verifying the quality of a product

These functions make UCE a valuable tool for developers of all kinds of systems, because they can justify their efforts and improve upon a system.

International Organization for Standardization

The International Organization for Standardization is an international standard-setting body composed of representatives from various national standards organizations. Founded on 23 February 1947, the organization develops and publishes worldwide technical, industrial, and commercial standards.

ISO 9241

ISO 9241 is a multi-part standard from the International Organization for Standardization covering ergonomics of human-computer interaction. It covers aspects of usability, including hardware, software, and usability processes. You could use the standard to design a workstation, evaluate a display, set usability metrics, evaluate a graphical user interface, test out a new keyboard, assess a novel interaction device such as a joystick, check that the working environment is up to scratch, and measure reflections and color on a display screen. It contains checklists to help structure a usability evaluation, examples of how to operationalize and measure usability, and extensive bibliographies. It even has the courage to define usability.

What is the purpose of ISO 9241?

ISO 9241-11:2018 provides a framework for understanding the concept of usability and applying it to situations where people use interactive systems and other types of systems (including built environments), products (including industrial and consumer products), and services (including technical and personal services).

The ISO/IEC 9126-4 approach to Usability Metrics
- The ISO 9241-11 standard defines usability as “the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency and satisfaction in a specified context of use”.
- Usability is not a single, one-dimensional property but rather a combination of factors.

ISO/IEC 9126-4 recommends that usability metrics should include:
• Effectiveness: The accuracy and completeness with which users achieve specified goals.
• Efficiency: The resources expended in relation to the accuracy and completeness with which users achieve goals.
• Satisfaction: The comfort and acceptability of use.

1. Usability Metrics for Effectiveness
- Completion Rate
- Number of Errors

2. Usability Metrics for Efficiency

Efficiency is measured in terms of task time, that is, the time (in seconds and/or minutes) the participant takes to successfully complete a task. The time taken to complete a task can then be calculated by simply subtracting the start time from the end time:
Task Time = End Time - Start Time

3. Usability Metrics for Satisfaction

User satisfaction is measured through standardized satisfaction questionnaires, which can be administered after each task and/or after the usability test session.

3.1 Task Level Satisfaction

After users attempt a task (irrespective of whether they manage to achieve its goal or not), they should immediately be given a questionnaire to measure how difficult that task was. Typically consisting of up to 5 questions, these post-task questionnaires often take the form of Likert scale ratings, and their goal is to provide insight into task difficulty as seen from the participants’ perspective.

3.2 Test Level Satisfaction

Test Level Satisfaction is measured by giving a formalized questionnaire to each test participant at the end of the test session. This serves to measure their impression of the overall ease of use of the system being tested. |
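The three kinds of usability metric described above can be computed with very little code. The following is a minimal sketch, not a prescribed method: the function names, the sample session data, and the choice to express completion rate as successful attempts divided by total attempts (as a percentage) are assumptions made for illustration. Task time follows the Task Time = End Time - Start Time formula given earlier, and task-level satisfaction is summarized here as the mean of hypothetical 1-5 Likert ratings.

```python
from datetime import datetime
from statistics import mean


def completion_rate(successes: int, attempts: int) -> float:
    """Effectiveness: percentage of task attempts completed successfully."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    return 100.0 * successes / attempts


def task_time_seconds(start: datetime, end: datetime) -> float:
    """Efficiency: Task Time = End Time - Start Time, in seconds."""
    return (end - start).total_seconds()


# Hypothetical data for one task in a usability test session.
rate = completion_rate(successes=8, attempts=10)
elapsed = task_time_seconds(datetime(2024, 1, 1, 10, 0, 0),
                            datetime(2024, 1, 1, 10, 2, 30))
likert_ratings = [4, 5, 3, 4, 4]  # post-task answers on a 1-5 scale

print(f"completion rate: {rate:.0f}%")
print(f"task time: {elapsed:.0f} s")
print(f"mean task-level rating: {mean(likert_ratings):.1f}")
```

Reporting all three numbers side by side reflects the point made above that usability is a combination of factors rather than a single property.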

|
